GPT-3 has been the talk of the town. It is a deep learning-based language model that generates human-like text almost instantly. Chatbots built on it answer questions based on the data the model was trained on.

Given a short text prompt, it can generate much longer output, such as code, poems, stories, FAQs, articles, and more.

The depth of understanding and sheer knowledge it seems to possess is remarkable. With its continuous development, GPT-3 is soon going to have a huge impact on the future of conversational AI.

However, impressive software is not necessarily flawless, and GPT-3 has its own limitations. It is still very much a work in progress, so it is important to analyze where it falls short, both technically and from the user experience point of view.

Let’s find out in our next section.

Limitations of GPT-3 Chatbots Explained

Exploring Chat-GPT 3 was quite impressive despite its limitations. Using its generative capabilities can feel like using a search engine. Still, after a couple of interactions with a few language models, we found many limitations of GPT-3.

If you are new to OpenAI, you can read our blog on what OpenAI is before moving on to the limitations.

Here are the major limitations related to the technical aspect and the user experience of the chatbot.

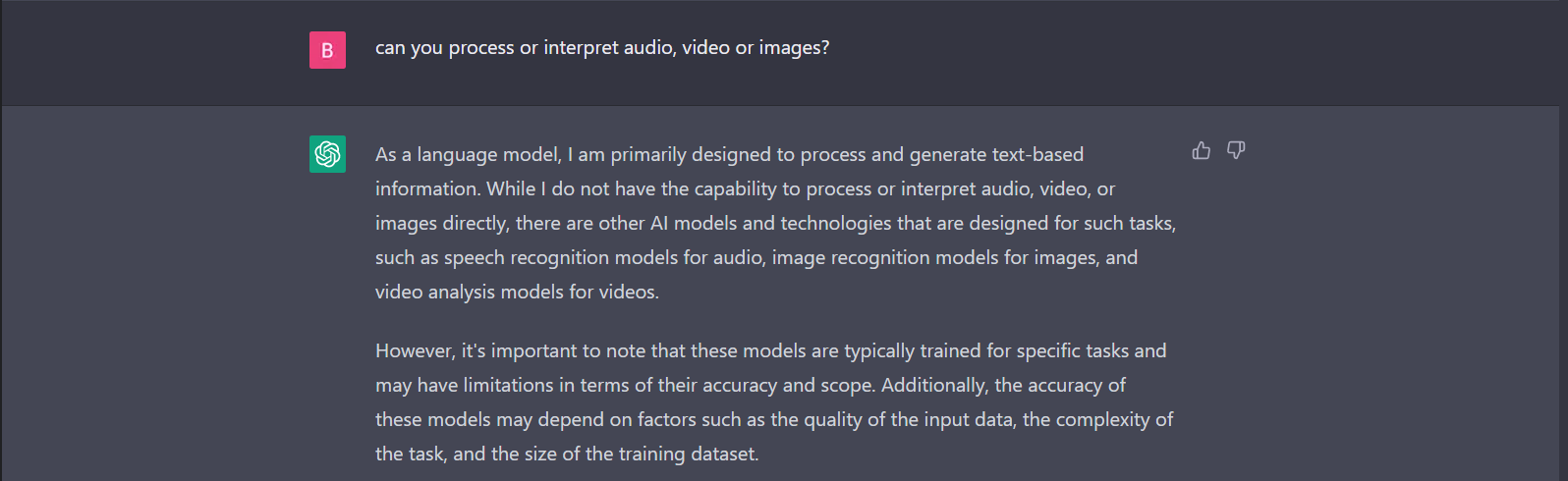

1. Natural language processing

GPT-3 chatbots do not understand conversational text the way humans do, so generating a correct answer as a human would is difficult. Because they rely on a pre-trained model, they sometimes produce irrelevant code or answers. The output can lack a precise understanding of the words used, which limits how accurately the chatbot can answer questions.

2. Uncertainty of input leads to ambiguity

A chatbot is not like the Google search engine, where you can throw in any question and expect an accurate answer. The chatbot generates text the way you want only if your wording is unambiguous. Be specific with your questions and language, as the chatbot struggles with complex or vague questions and may respond with errors.

3. Generating text with inappropriate answers

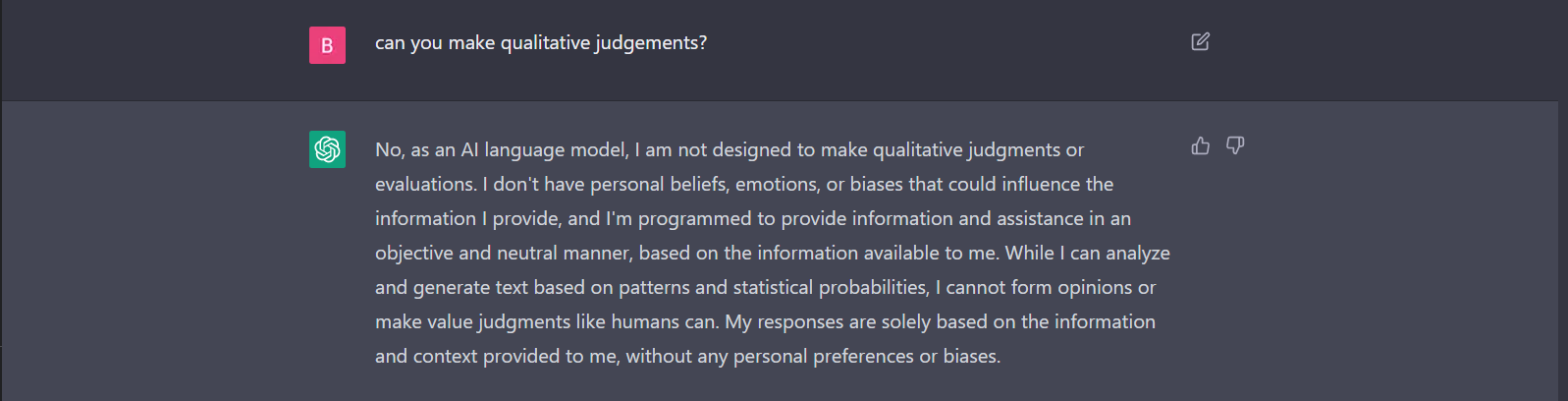

GPT-3 chatbots do not have human emotions and can sometimes give insensitive responses. The language model is not trained to understand emotions or to think the way a human does. This can frustrate users and push them to look for alternatives that offer a wider range of possibilities.

4. Lack of fine-tuning

While GPT-3 chatbots can generate impressive examples and answers based on existing language models, they cannot think beyond what they were trained on. This limits innovation and creativity when they are required.

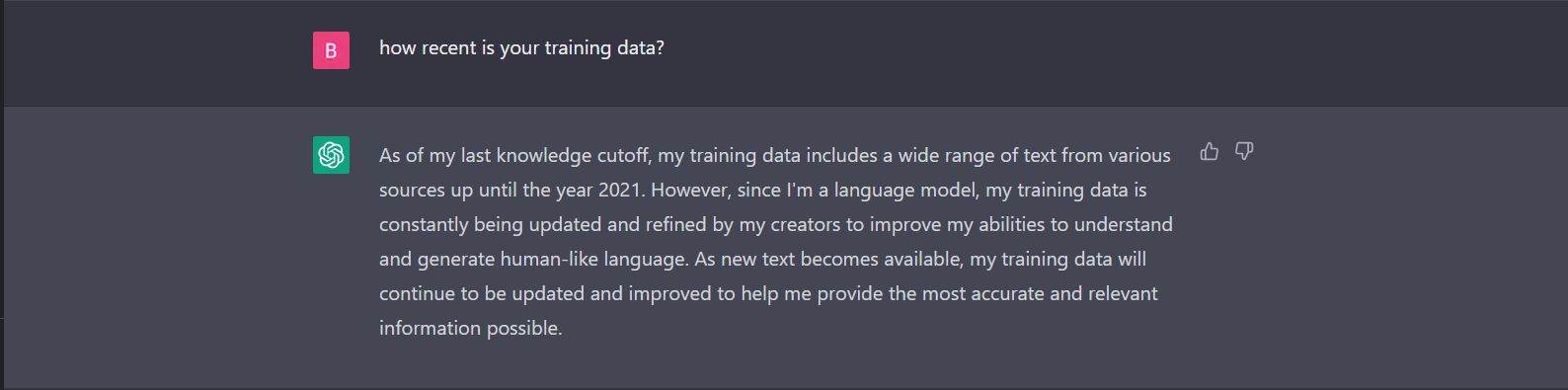

5. Dependency on already trained models

GPT-3 chatbots require a large amount of data to train the model and function well. This training is what gives the chatbot the ability to adapt to new situations and provide information. If the chatbot is not trained well, it will struggle to give users the right context, and its answers will be of little use.

6. Inability to add personalization

These chatbots have difficulty personalizing responses to users, which leads to conversations that may not be useful. Responses shaped only by the training data do not always read like text written for a specific person. This is particularly true in complex situations where users seek advice or personalized recommendations.

7. Inability to learn from the conversation

GPT-3 chatbots lack the ability to learn from user feedback and adapt their responses accordingly. This means that they may continue to provide inaccurate or unhelpful responses even after receiving feedback from users.
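One common workaround is to carry earlier turns, including the user's feedback, inside each new prompt, since the model itself does not change between messages. Below is a minimal sketch of that idea in Python. The `ConversationBuffer` class, the `chat_once` helper, and the `generate` callable are hypothetical names used only for illustration; `generate` stands in for whatever function sends a prompt to the model and returns its reply.

```python
from typing import Callable, List


class ConversationBuffer:
    """Keeps recent turns so they can be re-sent with every request.

    The model does not update itself during a chat, so any "memory" of
    user feedback has to travel inside the prompt.
    """

    def __init__(self, max_turns: int = 6) -> None:
        self.max_turns = max_turns
        self.turns: List[str] = []

    def add(self, speaker: str, text: str) -> None:
        self.turns.append(f"{speaker}: {text}")
        # Keep only the most recent turns so the prompt stays short.
        self.turns = self.turns[-self.max_turns:]

    def build_prompt(self, user_message: str) -> str:
        history = "\n".join(self.turns)
        prefix = history + "\n" if history else ""
        return f"{prefix}User: {user_message}\nBot:"


def chat_once(
    buffer: ConversationBuffer,
    user_message: str,
    generate: Callable[[str], str],  # hypothetical: sends a prompt, returns text
) -> str:
    """Send one message with the stored history and record the reply."""
    prompt = buffer.build_prompt(user_message)
    reply = generate(prompt).strip()
    buffer.add("User", user_message)
    buffer.add("Bot", reply)
    return reply
```

Note that this only helps within a single conversation. Once old turns fall out of the buffer, the chatbot has no record of them.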

8. Vulnerable to trained data

GPT-3 chatbots can be vulnerable to bias, particularly if the training data used to train the model contains biased or discriminatory content. This can lead to inaccurate or offensive responses that may harm users.

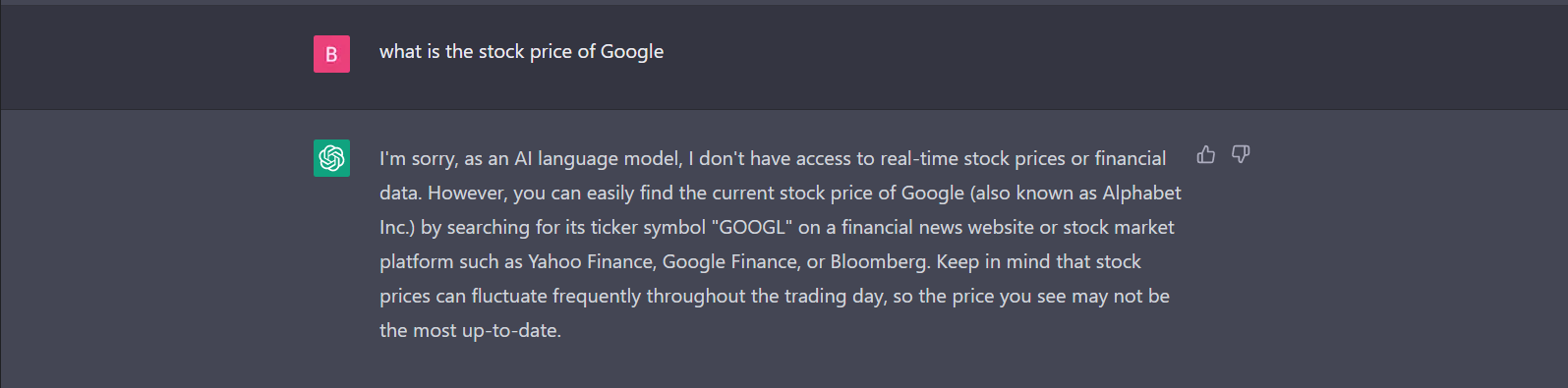

9. Limitations to generating internet text

GPT-3 chatbots may struggle to handle complex tasks that require multiple steps or interactions. This can limit their usefulness in applications such as customer support or financial services, where users may require assistance with more complex tasks.

10. Lack of research on real-world experiences

GPT-3 chatbots lack real-world experience, which can limit their ability to provide relevant and accurate responses to users in certain situations.

For example, they may struggle to provide advice or guidance on topics that require practical experience or expertise, such as medical advice or legal assistance.

To avoid these limitations and leverage the benefits of GPT-3, you need to learn how to use GPT-3. Apart from the above limitations, there are a few technical issues with GPT-3. Let us understand each of them below.

1. Delay in providing a response

GPT-3 chatbots can sometimes take a while to respond to queries, particularly if the model is running on a slow or underpowered server. This can lead to delays in the conversational flow and a poor user experience.
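A chatbot can soften the effect of a slow response by giving up after a time limit and showing a fallback message. Here is a minimal sketch using only the Python standard library. The names `reply_with_timeout`, `FALLBACK`, and `slow_model` are hypothetical, and `slow_model` simply simulates a slow backend with a short sleep rather than calling a real model.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from concurrent.futures import TimeoutError as FutureTimeout
from typing import Callable

FALLBACK = "Sorry, this is taking longer than expected. Please try again."


def reply_with_timeout(
    generate: Callable[[str], str],  # hypothetical: sends a prompt, returns text
    prompt: str,
    timeout_s: float = 5.0,
    fallback: str = FALLBACK,
) -> str:
    """Return the model's reply, or a fallback if it does not arrive in time."""
    executor = ThreadPoolExecutor(max_workers=1)
    future = executor.submit(generate, prompt)
    try:
        return future.result(timeout=timeout_s)
    except FutureTimeout:
        return fallback
    finally:
        # Do not wait for the slow call; the worker finishes in the background.
        executor.shutdown(wait=False)


def slow_model(prompt: str) -> str:
    """Stand-in for a slow backend (assumption: 0.3 s delay)."""
    time.sleep(0.3)
    return "late answer"
```

The timeout does not make the model faster. It only keeps the conversation moving while the slow request finishes or is discarded.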

2. Limited control over the language models

Developers of GPT-3 chatbots have limited control over the model’s output, which can make it difficult to ensure that responses are relevant and appropriate for a given context. This can be particularly problematic in situations where users need instant answers to difficult situations or questions.
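The control that does exist is mostly indirect, through request settings that shape how the text is sampled. The sketch below builds such a request as a plain dictionary. The parameter names `max_tokens`, `temperature`, and `stop` follow OpenAI's text completion API as we understand it, so check the current API reference before relying on them. The helper name `build_completion_request` and the specific values are assumptions chosen for illustration.

```python
from typing import Any, Dict, List, Optional


def build_completion_request(
    prompt: str,
    *,
    cautious: bool = True,
    stop: Optional[List[str]] = None,
) -> Dict[str, Any]:
    """Build request settings that nudge, but do not guarantee, the output."""
    request: Dict[str, Any] = {
        "prompt": prompt,
        # Caps the length of the reply (illustrative value).
        "max_tokens": 150,
        # Lower values make output more predictable, higher values more varied.
        "temperature": 0.2 if cautious else 0.9,
    }
    if stop:
        # Sequences at which the model should stop generating.
        request["stop"] = stop
    return request
```

Even with conservative settings, the model can still return an irrelevant or inappropriate answer, so these settings reduce the risk rather than remove it.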

3. Cost to maintain or add new features

GPT-3 is a resource-intensive model that requires significant computing power and storage capacity to run effectively. A deployment that does not keep up with newer technology may not be able to add new features, yet upgrading can make GPT-3 chatbots expensive to deploy and maintain, particularly for smaller organizations or individuals.

4. Reliability on various platforms

GPT-3 chatbots require integration with third-party platforms. Relying on OpenAI’s API or other machine learning services creates a dependency on these platforms and reduces the flexibility of the chat functionality.

5. Limited use of training data

GPT-3 chatbots require large amounts of high-quality training data to perform effectively. However, training data can be difficult to obtain or may not be available for certain tasks or applications, which can limit the chatbot’s usefulness in these contexts.

Overall, GPT-3 has several benefits, but there are still limitations associated with the chatbot. It’s important to consider all the above limitations while deploying GPT-3 into various applications. You can also explore the top GPT-3 alternatives and compare them with GPT-3.

Addressing these limitations should bring the application closer to a finely tuned artificial intelligence that responds promptly, as a human would.

FAQs

A GPT-3 chatbot is an AI-powered chatbot that uses the GPT-3 language model, including its few-shot and zero-shot learning abilities, to process natural language and generate human-like responses.

GPT-3 chatbots can handle a wide range of conversations, including answering user queries and FAQs, recommending products, booking appointments, suggesting routes, and so on. They can also be used to write articles, poems, and titles.

Although GPT-3 is advanced, the tool has limitations such as inappropriate responses, trouble with complex tasks, a lack of domain knowledge, and difficulty understanding human language, tone, and humor.
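To make the two terms concrete: a zero-shot prompt gives the model only an instruction, while a few-shot prompt also includes a handful of worked examples before the real question. Here is a minimal sketch of how such prompts could be assembled. The function names and the `Input:` / `Output:` layout are assumptions for illustration, not a format that GPT-3 requires.

```python
from typing import List, Tuple


def zero_shot_prompt(instruction: str, query: str) -> str:
    """Instruction only: the model gets no worked examples."""
    return f"{instruction}\n\nInput: {query}\nOutput:"


def few_shot_prompt(
    instruction: str,
    examples: List[Tuple[str, str]],
    query: str,
) -> str:
    """Instruction plus a few (input, output) examples before the query."""
    shots = "\n\n".join(f"Input: {i}\nOutput: {o}" for i, o in examples)
    return f"{instruction}\n\n{shots}\n\nInput: {query}\nOutput:"


examples = [("Great service!", "positive"), ("Never again.", "negative")]
print(few_shot_prompt("Classify the sentiment.", examples, "I love it"))
```

In both cases the model is not retrained. The examples only live in the prompt for that one request.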

Yes, there are ethical concerns around the use of GPT-3 chatbots, particularly around issues such as privacy, bias, and the potential for misuse.

For example, there are concerns that GPT-3 chatbots may be used to spread misinformation or to manipulate users into making decisions that are not in their best interests.

Yes, GPT-3 chatbots can be used in industries beyond customer service, such as healthcare or education.

For example, they can be used to provide personalized health advice, assist with medical diagnosis, or deliver educational content to students.

GPT-3 chatbots are highly advanced and can generate coherent and contextually relevant responses. However, they still have limitations and may not always perform as well as other types of conversational AI, such as rule-based chatbots or voice assistants.

GPT-3 chatbots learn and improve their responses through a process called deep learning, in which they are trained on large datasets of human language. This training allows the chatbot to learn patterns and relationships in language and use that knowledge to generate coherent and contextually relevant responses.

Conclusion

Chat-GPT is a version of OpenAI’s GPT (Generative Pre-trained Transformer) language model. Like other language models, it is designed to generate human-like text based on input. It can generate a wide range of responses to diverse prompts and questions because it was trained on a sizable text dataset.

However, GPT-3 and certain other language models have limitations. They cannot access the internet or other sources of knowledge, so their responses can be inaccurate on anything outside the specific knowledge base they were trained on. Furthermore, language models may not always give results that are totally accurate.